- What You’ll Learn

- Implementing vs. Testing: The Difference Is a Workflow

- Pick the Right First Use Cases (Hint: Not “Everything”)

- The AI Toolkit for Independent Agencies

- A Practical Implementation Roadmap (Built for Agencies, Not Lab Coats)

- Guardrails That Keep You Innovative (and Insurable)

- Privacy + confidentiality: don’t feed the machine sensitive data

- Accuracy + hallucinations: trust, then verify, then verify again

- Contracts + carrier restrictions: read the fine print before your AI does

- Regulatory awareness: states are paying attention to AI in insurance

- A simple governance framework that won’t overwhelm you

- Vendor Questions That Save You From Future Headaches

- Measuring Impact Without Guessing

- Common Missteps (and How to Dodge Them)

- Wrapping It Up: AI Should Make Your Agency More Human

- Extra: “What It Really Feels Like” When Agencies Implement AI

AI in an independent insurance agency isn’t a sci-fi leap; it’s a practical (and sometimes hilariously imperfect) way to remove busywork so your team can do more

“hero work”: advising clients, strengthening renewals, and building relationships. In IA Magazine’s “Exploring AI” conversation about implementing tools,

the theme is clear: start small, earn team buy-in, and weave AI into the workflows you already trust, not into a separate “AI island” nobody visits.

This guide turns that idea into a hands-on playbook. We’ll walk through which AI tools actually help agencies, how to implement them without creating new

E&O exposures, what to ask vendors, and how to measure impact so “we tried AI” becomes “we improved our operation.”

Implementing vs. Testing: The Difference Is a Workflow

“Testing AI” is when someone opens a chatbot, pastes a prompt, and says, “Whoa, it wrote an email.” Fun. But implementing AI means:

(1) picking a repeatable process, (2) defining what data is allowed, (3) training the team on consistent steps, and (4) tracking results.

Here’s the simplest way to tell the difference: if AI output isn’t landing somewhere your agency already works (your AMS, CRM, ticketing system,

marketing platform, or documented SOP), it’s probably just a demo with good vibes.

Pick the Right First Use Cases (Hint: Not “Everything”)

Agencies get stuck when they start with technology instead of friction. The fastest wins come from high-volume, low-drama tasks: work that repeats

all day but doesn’t require a licensed brain to be brilliant every time.

High-impact, low-risk use cases to start with

- Email drafting + polishing: follow-ups, renewal check-ins, appointment reminders, coverage review invitations.

- Call/meeting summaries: turn producer calls into clean notes and next steps (with human review before filing).

- Document triage: classify inbound docs (ACORD forms, loss runs, COI requests), route them, and flag missing info.

- Marketing content scaffolding: campaign outlines, subject lines, social post variations (always fact-check and brand-check).

- Internal knowledge search: “Where’s the SOP for certificates?” “What’s our renewal timeline?” “Who owns this carrier appetite?”

Use cases to delay until you have stronger guardrails

- Coverage advice automation: anything that could be interpreted as binding coverage or guaranteeing outcomes.

- Client-facing chatbots on coverage questions: powerful, but easy to mislead without tight controls and approved knowledge sources.

- Underwriting “decisions”: AI can support submissions, but humans must own decisions, especially in regulated contexts.

A good first project should feel boring in the best way: obvious, measurable, and easy to repeat. If your first AI initiative sounds like a keynote title,

it’s probably too big.

The AI Toolkit for Independent Agencies

“AI tool” can mean a lot of things. In agencies, the most sustainable implementations tend to be the ones embedded in systems you already use, because

they reduce copy/paste behavior, keep data inside approved environments, and fit how people actually work.

1) AI built into your agency platforms

Many agencies are seeing AI features appear directly inside agency management systems and related platforms: workflow assistance, document extraction,

reconciliation support, and book-of-business insights. When AI is embedded, it can feel less like “another app” and more like a helpful layer on top of

existing processes.

Example capabilities you’ll increasingly see in platform-embedded AI:

- Risk/renewal intelligence: surfacing potential coverage gaps and upsell/cross-sell opportunities from existing account context.

- Automated extraction + autofill: pulling key fields from documents and placing them into your AMS forms.

- Reconciliation support: ingesting carrier statements and matching them to policies/plans with audit-friendly tracking.

2) Document intelligence (a.k.a. “Stop Re-typing PDFs”)

In many agencies, the real time thief isn’t writing; it’s retyping. Document AI focuses on extracting structured fields from messy inputs:

scanned PDFs, emailed statements, forms, loss runs, endorsements, and attachments.

A smart approach is to start with one document type that’s frequent and predictable (like standard carrier statements, benefit plan docs, or recurring

forms) and measure time saved per transaction.

3) Communications + marketing copilots

AI can speed up communication work without touching sensitive data, if you keep it on a tight leash. Use it to draft structure, tone, and options:

subject lines, outreach sequences, renewal reminders, and reputation management prompts.

The agency still owns the truth. The AI can help you say it faster.

4) Meeting transcription + summarization

Producers and account managers live in conversations, but agencies often struggle to convert conversations into clean documentation. Transcription and

summarization tools can help create call notes, action items, and follow-up drafts.

Key rule: treat summaries like a first draft. A human must verify accuracy before notes are stored or used to serve a client.

5) “Agency knowledge” assistants

Some of the best ROI comes from internal AI that answers questions using your approved documents: SOPs, carrier appetite guides, training manuals,

checklists, and client service standards. This reduces interruptions (“Hey, where do I find…?”) and speeds training for new hires.

A Practical Implementation Roadmap (Built for Agencies, Not Lab Coats)

Step 1: Name one painful process

Pick a workflow that meets three criteria: high volume, repeatable, and currently annoying. Examples: endorsement intake, COI requests, renewal outreach,

statement reconciliation, or inbound email triage.

Step 2: Define “allowed data” before anyone starts prompting

Implementation breaks when staff unknowingly paste sensitive info into the wrong tool. Establish clear rules on what can and can’t be used:

personally identifiable information (PII), credentials, carrier-provided proprietary data, and anything restricted by contract should be treated as off-limits

unless you’ve approved a tool with appropriate protections.
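
One way to back up the allowed-data rule is a lightweight pre-check that scans text before anyone pastes it into a tool. The sketch below is illustrative only: the pattern set and the `find_restricted_data` helper are hypothetical names, and simple regular expressions will miss plenty of sensitive data, so treat this as a complement to a written policy, not a substitute for one.

```python
import re

# Hypothetical patterns for illustration; real PII detection needs far more coverage.
RESTRICTED_PATTERNS = {
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "phone": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def find_restricted_data(text: str) -> list[str]:
    """Return the names of restricted patterns found in the text, sorted."""
    return sorted(
        name for name, pattern in RESTRICTED_PATTERNS.items() if pattern.search(text)
    )


draft = "Client Jane Doe, SSN 123-45-6789, reach her at jane@example.com."
flags = find_restricted_data(draft)
if flags:
    print("Do not paste into an unapproved tool. Found:", ", ".join(flags))
```

A check like this is most useful as a nudge at the moment of pasting; the written rules still define what counts as off-limits.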

Step 3: Build a tiny “prompt kit” (yes, really)

Your team doesn’t need 300 prompts. They need 5–10 good ones tied to real tasks, such as:

- “Draft a renewal check-in email in a professional, friendly tone. Keep it under 140 words.”

- “Turn these bullet notes into a client-facing recap with next steps.”

- “Rewrite this message for clarity and remove any absolute statements.”

- “Create three subject lines focused on value and reassurance.”

Standard prompts reduce inconsistent output and help you build repeatable quality.
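
A prompt kit can live in a shared document, but it can also be kept as a small set of named templates so everyone fills in the same blanks. Here is a minimal sketch, assuming a hypothetical `PROMPT_KIT` dictionary and `build_prompt` helper; the prompt wording comes from the examples above, and the field names are made up for illustration.

```python
from string import Template

# Hypothetical shared prompt kit: names and fields are illustrative.
PROMPT_KIT = {
    "renewal_check_in": Template(
        "Draft a renewal check-in email in a $tone tone. Keep it under $max_words words."
    ),
    "client_recap": Template(
        "Turn these bullet notes into a client-facing recap with next steps:\n$notes"
    ),
    "subject_lines": Template(
        "Create $count subject lines focused on value and reassurance."
    ),
}


def build_prompt(name: str, **fields: str) -> str:
    """Fill a named template; raises KeyError if the prompt or a field is missing."""
    return PROMPT_KIT[name].substitute(**fields)


print(build_prompt("renewal_check_in", tone="professional, friendly", max_words="140"))
```

Because `substitute` fails loudly on a missing field, a half-filled prompt never reaches the tool.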

Step 4: Pilot with a small, curious group

Choose a handful of early adopters (producers, account managers, ops) and run a 2–4 week pilot with one defined workflow. Keep it small enough that

people can succeed, but real enough that it affects daily work.

Step 5: Integrate into existing workflows

If the pilot requires staff to open three separate tools, your adoption will crater. The win is when AI output flows naturally into the tools and steps

people already follow: templates, checklists, ticket queues, and documentation routines.

Step 6: Write the “human-in-the-loop” rule into the SOP

Put it in writing: AI can draft, summarize, classify, and suggest. People approve, verify, and send. This single decision prevents a lot of “oops”

moments that become E&O nightmares.
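
If any part of your workflow is scripted, the same rule can be enforced in code so that nothing goes out without a named reviewer. The sketch below is one possible shape, not a prescribed design: the `Draft` class and its methods are hypothetical, and the send step is a stand-in for whatever system actually delivers the message.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Draft:
    """An AI-generated draft that must be approved by a person before sending."""

    body: str
    approved_by: Optional[str] = None
    sent: bool = False

    def approve(self, reviewer: str) -> None:
        if not reviewer.strip():
            raise ValueError("A named reviewer is required.")
        self.approved_by = reviewer

    def send(self) -> None:
        # Hypothetical send step; a real system would hand off to email or the AMS here.
        if self.approved_by is None:
            raise PermissionError("Draft has not been reviewed by a person.")
        self.sent = True


draft = Draft(body="Hi Pat, your renewal is coming up next month...")
try:
    draft.send()
except PermissionError as err:
    print("Blocked:", err)

draft.approve("account.manager")
draft.send()
print("Sent:", draft.sent, "| Approved by:", draft.approved_by)
```

Recording who approved each draft also gives you the documentation trail that the SOP asks for.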

Guardrails That Keep You Innovative (and Insurable)

AI can create real risk when agencies treat it like an all-knowing expert. The safer framing is: AI is a junior assistant who works fast, speaks confidently,

and occasionally makes things up. Your agency supplies the judgment.

Privacy + confidentiality: don’t feed the machine sensitive data

Many free or low-cost tools have one-sided terms and unclear data handling. An acceptable-use policy should explicitly limit what staff can input,

especially PII, proprietary information, credentials, and carrier-restricted data.

Accuracy + hallucinations: trust, then verify, then verify again

AI can produce “hallucinations”: plausible-sounding claims that are simply false. In an agency context, this can look like incorrect coverage descriptions,

wrong requirements, or invented carrier rules. Make review mandatory before AI output becomes client-facing.

Contracts + carrier restrictions: read the fine print before your AI does

Carrier agreements may limit how carrier data is used, including limitations on sharing data with third parties. Vendor AI tools can count as a third party.

Your implementation plan should include a quick contract check so you’re not accidentally violating appointment agreements.

Regulatory awareness: states are paying attention to AI in insurance

Even if your agency isn’t building underwriting models, regulators are increasingly focused on AI governance in insurance, especially around transparency,

risk management, and unfair discrimination. The practical takeaway for agencies is simple: document your process, use approved tools, and maintain audit trails.

A simple governance framework that won’t overwhelm you

- Approval: which tools are allowed, for which tasks.

- Data rules: what may be entered, stored, or shared.

- Human review: who signs off on outputs and where it’s documented.

- Monitoring: periodic spot checks for accuracy, tone, and compliance.

- Training: onboarding for prompts, risks, and safe usage.
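
The approval item in the framework above can be written down as a simple allowlist that maps each approved tool to the tasks it may be used for. This is a sketch under stated assumptions: the tool names and task labels are placeholders invented for illustration, not product recommendations.

```python
# Hypothetical allowlist: tool names and task labels are placeholders.
APPROVED_TOOLS = {
    "ams_embedded_assistant": {"document_extraction", "call_summary"},
    "marketing_copilot": {"email_draft", "subject_lines"},
}


def is_allowed(tool: str, task: str) -> bool:
    """True only if the tool is approved and the task is on its list."""
    return task in APPROVED_TOOLS.get(tool, set())


print(is_allowed("marketing_copilot", "email_draft"))      # True
print(is_allowed("marketing_copilot", "coverage_advice"))  # False
print(is_allowed("free_chatbot", "email_draft"))           # False
```

Anything not listed is denied by default, which matches the spirit of publishing approved tools instead of trying to list every forbidden one.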

Vendor Questions That Save You From Future Headaches

Vendor conversations can sound like a parade of buzzwords. To keep it grounded, focus on fit, integration, security, and accountability.

Industry checklists and “smart questions” frameworks can help agencies compare vendors without relying on vibes.

Must-ask questions (agency edition)

- Workflow fit: What agency problems does this solve, specifically?

- Integration: Does it connect to our AMS/CRM, or will we be copy/pasting forever?

- Data handling: Is our data used for training? How is it stored? How is it deleted?

- Security + auditability: Do we get logs, role-based access, and exportable records?

- Human-in-the-loop controls: Can outputs be reviewed/approved before sending or filing?

- Accuracy claims: How do you test quality? What error rates have you observed?

- Support: What training and implementation help do you provide?

If a vendor can’t clearly explain how they prevent data leakage and misuse, that’s not “innovative”; that’s “expensive later.”

Measuring Impact Without Guessing

AI implementation should improve outcomes you already care about. Pick a few metrics and track them before and after the pilot.

Useful agency metrics

- Cycle time: time from request to completion (endorsements, COIs, renewals).

- Touches per transaction: how many steps/handoffs happen before done.

- Quality checks: error rates, rework, missing info, corrections needed.

- Client responsiveness: response time and satisfaction indicators.

- Capacity reclaimed: hours saved per week and where that time goes.

The goal isn’t “AI output volume.” The goal is fewer bottlenecks, cleaner documentation, faster service, and more time for revenue and retention work.
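
Cycle time is the easiest of these metrics to compute if you can export request and completion timestamps. The sketch below compares median cycle time before and during a pilot; the timestamps are made-up sample data and the `cycle_hours` helper is a hypothetical name, so swap in whatever your AMS or ticketing export actually provides.

```python
from datetime import datetime
from statistics import median


def cycle_hours(requested: str, completed: str) -> float:
    """Hours between request and completion, given ISO 8601 timestamps."""
    delta = datetime.fromisoformat(completed) - datetime.fromisoformat(requested)
    return delta.total_seconds() / 3600


# Made-up sample data for illustration: (requested, completed) per COI request.
before_pilot = [
    ("2024-03-04T09:00", "2024-03-04T15:00"),
    ("2024-03-05T10:00", "2024-03-06T10:00"),
    ("2024-03-06T08:30", "2024-03-06T16:30"),
]
during_pilot = [
    ("2024-04-01T09:00", "2024-04-01T11:00"),
    ("2024-04-02T13:00", "2024-04-02T17:00"),
    ("2024-04-03T09:15", "2024-04-03T12:15"),
]

before = median(cycle_hours(r, c) for r, c in before_pilot)
during = median(cycle_hours(r, c) for r, c in during_pilot)
print(f"Median cycle time before: {before:.1f} h, during pilot: {during:.1f} h")
```

The median is used here so that one unusually slow request does not distort the comparison.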

Common Missteps (and How to Dodge Them)

Misstep 1: Shadow AI

If you don’t give staff safe, approved options, they’ll still use AI, just quietly. Bring usage into the open, publish rules, and provide sanctioned tools.

Misstep 2: Letting AI “answer coverage questions” unchecked

AI can draft explanations, but coverage is contextual. Build a rule that anything interpretive is reviewed by a qualified human before it’s sent.

Misstep 3: Buying a tool before you define success

If you can’t describe your success metrics in one sentence, you’re shopping for technology as entertainment. Decide what you want to improve first.

Misstep 4: Training once and hoping forever

AI use changes fast. Update your prompt kit, refresh the acceptable-use policy, and run periodic quality audits. This is a living program, not a one-time install.

Wrapping It Up: AI Should Make Your Agency More Human

The best AI implementations don’t replace relationships; they protect them. When AI reduces retyping, improves documentation, and speeds routine communication,

your team can spend more time advising clients and less time battling inbox chaos.

Start small. Win trust. Integrate into your workflows. Measure what changed. Then scale thoughtfully. That’s how implementing AI tools becomes a competitive advantage

instead of a chaotic experiment.

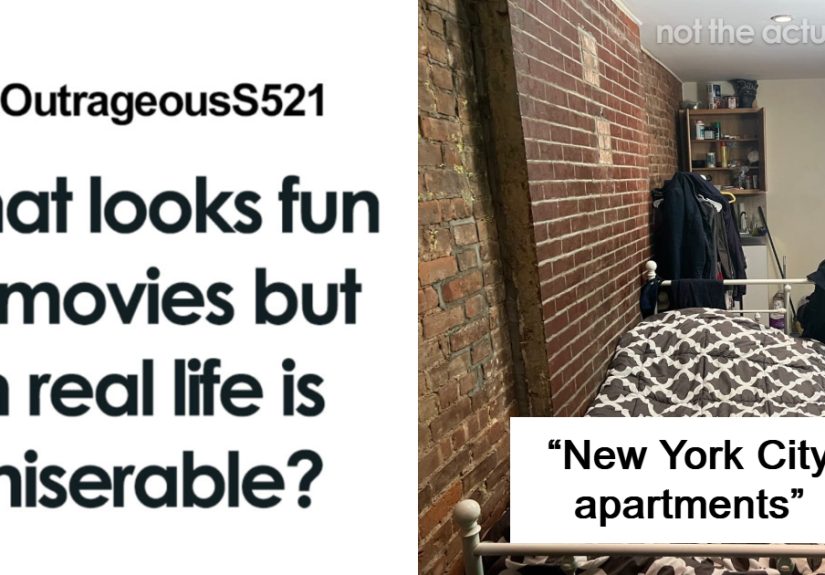

Extra: “What It Really Feels Like” When Agencies Implement AI

Here’s the part most AI discussions skip: the emotional weather. In the first week of implementation, teams often split into three camps:

the “This is awesome!” group, the “This is terrifying!” group, and the “I’m ignoring this until it goes away” group. All three are normal.

The trick is building a rollout that lowers fear without lowering standards.

Early wins usually come from communication tasks because they’re visible and satisfying. Someone uses AI to polish a renewal email, and suddenly the team

sees the point: faster drafts, clearer tone, fewer mental context switches. But week two is where reality shows up. People notice that AI sometimes invents

details, misses nuance, or confidently suggests something that would make a carrier underwriter spit out their coffee. That’s when the agency’s “human-in-the-loop”

rule stops being a slogan and becomes a safety feature.

Another common experience is “prompt drift.” At first, everyone uses the same prompt. Then everyone “improves it,” and suddenly the agency has 27 versions of

“write a follow-up email” with wildly different results. The fix is simple: keep a shared prompt kit and treat it like a living SOP. When someone improves a prompt,

update the kit instead of letting it become tribal knowledge.

Then comes the data question, usually prompted by a well-meaning employee pasting something they shouldn’t. Agencies that implement smoothly don’t rely on

“common sense” boundaries; they write them down. Staff appreciate clarity. “Don’t paste PII, credentials, or carrier-restricted data into unapproved tools”

removes ambiguity and prevents accidental risk. It also reduces the awkward situation where leadership has to say, “So… about that thing you did last Tuesday.”

By the end of the first month, the most successful agencies report a surprising shift: AI doesn’t just save time; it changes how people think about work.

Teams start asking, “Should we be doing this manually at all?” That question leads to better workflows, better templates, and better documentation habits.

Ironically, implementing AI often results in fewer shortcuts, because agencies realize consistency matters more when automation is involved.

Finally, there’s the “trust moment.” It usually happens when someone compares two weeks of results and sees hard proof: quicker turnaround, fewer errors,

better notes, or more consistent outreach. At that point, AI stops being a novelty and becomes infrastructure, like email, the AMS, or the phone system.

That’s the real milestone: not that your agency “uses AI,” but that your agency uses it responsibly, repeatedly, and with measurable improvement.