Table of Contents

- Defaults Aren’t Neutral: The First Setting Becomes the “Normal”

- What “Private By Default” Should Actually Mean

- Why Public Profiles Are a Bad Default for Kids

- We Already Know This Works: Platforms Have Started Doing It

- Why Social Networks Should Want This (Yes, Even From a Business Perspective)

- A Smart, Practical Blueprint for Private-by-Default Kids’ Accounts

- But What About Creativity, Visibility, and Opportunities?

- What Parents and Caregivers Can Do Right Now (Even Before Platforms Catch Up)

- Real-World Experiences: What Private-by-Default Could Prevent (and Improve)

- Conclusion: Make Safety the Default, Not the Homework

Privacy isn’t a “nice-to-have” for kids online; it’s the seatbelt. And right now, a lot of social networks still treat privacy like a fancy add-on you can buy with time, patience, and three separate settings menus. That’s backwards. If a platform knows a user is a minor, the platform should start that account private by default, and only allow a switch to public with clear explanations, real friction, and age-appropriate safeguards.

Why? Because defaults shape behavior. Most people (adults included) don’t change settings. Kids, who are still learning how to spot risks, manage attention, and understand long-term consequences, are even less likely to tinker with privacy controls. If the “starter pack” is public, the internet gets a front-row seat to a kid’s photos, comments, friend list, habits, and digital footprint. If the starter pack is private, kids can still create, connect, and explore, just with the doors not flung open to the entire world.

Defaults Aren’t Neutral: The First Setting Becomes the “Normal”

When a kid signs up for a social platform, the first few minutes matter. That’s when they pick a username, upload a profile photo, follow friends, and post something (often quickly, because the app is designed to reward momentum). If the account is public by default, they may share before they understand what “public” really means in practice: searchable profiles, shareable posts, and visibility far beyond classmates.

Private-by-default flips the script in a healthy way. It gives kids time to learn the culture of a platform, build a trusted circle, and understand features like comments, tagging, duets/stitches/remixes, and direct messages before strangers can interact freely. This is not about locking kids out of the internet. It’s about making sure the internet doesn’t immediately walk into kids’ rooms without knocking.

What “Private By Default” Should Actually Mean

Some platforms say “private” but still leave side doors open. A real private-by-default standard for kids’ accounts should cover more than a single toggle. Here’s what it should include.

1) Profile visibility and follower approval

- Only approved followers can see posts, stories, likes, and follower/following lists.

- Follower requests require explicit approval (no “auto-accept” suggestions for minors).

- Follower removals and blocking tools should be easy to find and use.

2) Tight controls on contact from strangers

- Direct messages should be limited to friends or followers a kid follows back (and for younger teens, messaging should be restricted by default).

- Commenting should default to friends/followers, not “everyone.”

- Tagging, mentions, and replies should default to friends/followers only.

3) Reduced “re-share” and remix features by default

- Features that let others reuse content (downloads, stitches, duets, remixes, reposts) should default off for minors.

- Clear explanations should appear before a minor enables reuse.

4) Lower discoverability (the “don’t suggest me to the entire universe” setting)

- Search engine indexing should be off for minors by default.

- “Suggest your account to others” settings should default off.

- Contact syncing and “people you may know” should be minimized for minors.

5) Stronger protections against data-driven amplification

- Minors should get the most privacy-protective data settings by default (especially around ad targeting and personalization).

- Platforms should provide age-appropriate explanations of what data is collected and how it’s used.
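To make the five groups above concrete, here is a minimal sketch of what a “private by default” settings object might look like. Every name in it (`MinorAccountDefaults`, `Audience`, `side_doors`, and each field) is hypothetical and invented for illustration; no real platform’s API is implied, and the on/off values are one reasonable reading of the list, not a standard.

```python
from dataclasses import dataclass, fields, replace
from enum import Enum


class Audience(Enum):
    """Who may perform an action on a minor's account (hypothetical)."""
    NO_ONE = "no_one"
    MUTUALS = "mutuals"        # followers the kid follows back
    FOLLOWERS = "followers"    # approved followers only
    EVERYONE = "everyone"


@dataclass(frozen=True)
class MinorAccountDefaults:
    # Hypothetical field names, grouped like the five points in the text.
    # 1) Profile visibility and follower approval
    private_profile: bool = True
    auto_accept_followers: bool = False
    # 2) Contact from strangers
    who_can_message: Audience = Audience.MUTUALS
    who_can_comment: Audience = Audience.FOLLOWERS
    who_can_tag_or_mention: Audience = Audience.FOLLOWERS
    # 3) Re-share and remix features (downloads, stitches, duets, reposts)
    allow_content_reuse: bool = False
    # 4) Discoverability (assumption: "minimized" is modeled as off)
    search_engine_indexing: bool = False
    suggest_account_to_others: bool = False
    contact_syncing: bool = False
    # 5) Data-driven amplification
    personalized_ads: bool = False


def side_doors(settings: MinorAccountDefaults) -> list[str]:
    """Return the names of settings that differ from the protective defaults."""
    baseline = MinorAccountDefaults()
    return [
        f.name
        for f in fields(settings)
        if getattr(settings, f.name) != getattr(baseline, f.name)
    ]


# Usage: an account that is "private" but lets everyone comment.
loosened = replace(MinorAccountDefaults(), who_can_comment=Audience.EVERYONE)
print(side_doors(loosened))  # ['who_can_comment']
```

The point of the `side_doors` helper is the same as the point of this section: a single “private” toggle can be on while other settings quietly stay open, so the whole bundle has to be checked.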

Why Public Profiles Are a Bad Default for Kids

Public-by-default accounts create risk in four big buckets: unwanted contact, reputational harm, data exposure, and “scale.” Kids don’t just face the same risks as adults; they face them with less leverage and fewer resources to respond.

Unwanted contact becomes a feature, not a bug

Public profiles invite interactions from people outside a kid’s real-life community. Some interactions are harmless. Some are not. The problem is that kids often can’t reliably tell which is which in real time, especially when messages feel flattering, urgent, or “friendly.” Private-by-default reduces that exposure by narrowing who can reach a kid in the first place.

Cyberbullying and harassment scale faster when profiles are public

When accounts are public, a conflict can jump from “a few classmates” to “anyone with an opinion and Wi-Fi.” Pile-ons, mocking comments, and unwanted attention get easier when strangers can find a profile instantly. Private-by-default adds a speed bump that helps keep teen drama from becoming public entertainment.

Digital footprints are permanent-ish, even when you delete

Kids experiment. That’s normal. But online, experimenting can leave receipts: screenshots, shares, quote-posts, reposts, and copies living on other accounts. Private-by-default doesn’t eliminate that reality, but it significantly reduces how quickly content can escape into wider circulation.

Public accounts are data-rich targets

Public profiles can reveal patterns: schools, routines, friend groups, hobbies, locations, and interests. Even without obvious identifiers, small clues can stack up. Private-by-default reduces how much of that “pattern data” is visible to people who don’t need it.

We Already Know This Works: Platforms Have Started Doing It

This isn’t a wild sci-fi proposal. The industry has already shown private-by-default is both possible and compatible with popular platforms.

Instagram’s move toward “Teen Accounts”

Meta has described “Teen Accounts” on Instagram as including built-in protections such as private accounts by default for younger teens and restrictions that shape who can contact them and what content they see. The key idea is simple: when teens start, they start safer, and the app doesn’t assume “public” is the right baseline.

TikTok’s under-18 privacy and safety defaults

TikTok’s published settings for users under 18 describe default private accounts for teens and additional limits (such as reduced reuse features and messaging restrictions for younger teens). Again, the pattern is clear: safer defaults first, customization later.

If major platforms can do this for some users, they can do it consistently across the board. The question isn’t “can it be done?” It’s “why isn’t it universal?”

Why Social Networks Should Want This (Yes, Even From a Business Perspective)

Private-by-default is often framed as a sacrifice platforms must make. But it can be a win for platforms too, if they’re willing to prioritize long-term trust over short-term growth hacks.

- Lower risk, fewer crises: Safer defaults reduce incidents that lead to bad press, lawsuits, and regulatory attention.

- Stronger parent trust: Parents are more willing to allow social media when they feel the platform isn’t setting traps.

- Better quality engagement: When kids interact mostly with known peers, engagement can be healthier and more meaningful.

- Clearer compliance posture: U.S. child privacy rules and rising state-level expectations make “privacy by design” the safer direction to travel.

Also, let’s be honest: platforms already use “defaults” to steer behavior. The Federal Trade Commission has highlighted how interface designs can nudge users into sharing more data than they intended. If platforms can engineer friction to keep people scrolling, they can engineer friction to keep kids safer.

A Smart, Practical Blueprint for Private-by-Default Kids’ Accounts

Making kids’ accounts private by default doesn’t mean turning social media into a digital library with a strict “no talking” policy. It means designing for real youth development and real risk patterns. Here’s a blueprint that could work across platforms.

Make “private” the default for all minors, with layered protections by age

- Under 16: Private by default + strongest limits on messaging and content reuse.

- 16–17: Private by default + clearer choices to go public, with in-app education and friction.
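The two tiers above could be sketched as a simple lookup by age. This is only an illustration: `TierDefaults` and `defaults_for_age` are made-up names, and modeling “strongest limits” as “messaging off, reuse locked” is an assumption, since the blueprint doesn’t pin those details down.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class TierDefaults:
    # Hypothetical shape; not taken from any real platform.
    tier: str
    private_profile: bool
    messaging: str               # "off" or "mutuals_only"
    content_reuse_enabled: bool
    reuse_locked_off: bool       # True = the teen cannot turn reuse on


def defaults_for_age(age: int) -> Optional[TierDefaults]:
    """Return the starting defaults for a minor, or None for adults."""
    if age < 0:
        raise ValueError("age must be non-negative")
    if age >= 18:
        return None  # adult defaults are out of scope for this sketch
    if age < 16:
        # Assumption: "strongest limits" = messaging off, reuse locked off.
        return TierDefaults("under_16", True, "off", False, True)
    # 16-17: still private, reuse off by default but the teen can opt in
    # after the in-app education and friction the blueprint describes.
    return TierDefaults("16_17", True, "mutuals_only", False, False)


print(defaults_for_age(14).messaging)  # off
print(defaults_for_age(17).messaging)  # mutuals_only
```

Both tiers start private; the age only changes how much room a teen has to loosen things later.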

Build an “Explain It Like I’m 14” privacy flow

Before a teen flips public, the platform should show a short, plain-language explanation of what changes:

searchable visibility, who can message, who can comment, whether content can be reused, and where posts can appear (including outside the platform).

No legalese. No ten-screen scroll. Just the truth.

Add friction to high-risk switches

Not all settings are equal. Turning on public visibility, enabling content downloads, or allowing “everyone” to comment should require an extra step, like a confirmation screen and a brief reminder of consequences. Friction isn’t punishment; it’s protection.
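In code, that kind of friction can be as small as a check that refuses a high-risk change until the teen has explicitly confirmed it. The sketch below is hypothetical: the setting names, `HIGH_RISK_VALUES`, `ConfirmationRequired`, and `apply_change` are invented for illustration, and a real app would show the confirmation screen where this code raises an error.

```python
# Hypothetical map of setting -> the value that counts as "high risk".
HIGH_RISK_VALUES = {
    "profile_visibility": "public",
    "content_downloads": True,
    "who_can_comment": "everyone",
}


class ConfirmationRequired(Exception):
    """Raised when a high-risk change is attempted without confirmation."""


def requires_confirmation(setting: str, new_value) -> bool:
    """True only when the change would switch on a high-risk value."""
    return setting in HIGH_RISK_VALUES and HIGH_RISK_VALUES[setting] == new_value


def apply_change(settings: dict, setting: str, new_value, confirmed: bool = False) -> dict:
    """Return an updated copy of settings, enforcing the extra step."""
    if requires_confirmation(setting, new_value) and not confirmed:
        raise ConfirmationRequired(
            f"Changing {setting} to {new_value!r} needs an explicit confirmation."
        )
    updated = dict(settings)  # copy so the original is untouched
    updated[setting] = new_value
    return updated


current = {"profile_visibility": "private", "who_can_comment": "followers"}

# Tightening a setting goes straight through.
current = apply_change(current, "who_can_comment", "no_one")

# Going public only works once the teen has confirmed.
current = apply_change(current, "profile_visibility", "public", confirmed=True)
```

Note the asymmetry: moving toward more privacy never triggers the extra step, only moving away from it does.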

Make safety controls easier than filters

If a platform can make it easy to add a “golden-hour glow” to a selfie, it can make it easy to:

block, restrict, report, mute, review followers, and control comments.

Safety tools should be front-and-center, not hidden behind a scavenger hunt.

Limit aggressive discoverability for minors

Kids don’t need to be “growth hacked.” Minors should not be auto-suggested broadly, and their accounts should not be designed to be easily found through contact matching or external search by default.

But What About Creativity, Visibility, and Opportunities?

This is the most common pushback: “If kids are private, they can’t build an audience.”

Two things can be true at once:

Kids can be talented and public exposure can be risky.

Private-by-default doesn’t ban public creative work. It simply prevents accidental public exposure, especially the kind that happens because the default was public and nobody realized what that meant.

Platforms can also create safer “public-ish” options for youth creators, such as:

limited public portfolios, moderated discovery spaces, or public posting that restricts contact features (for example: public posts with friends-only comments and messages). A teen can share art publicly without also opening the door to every stranger with a keyboard.

What Parents and Caregivers Can Do Right Now (Even Before Platforms Catch Up)

Until every platform adopts private-by-default for minors, families can still reduce risk with a quick, repeatable routine.

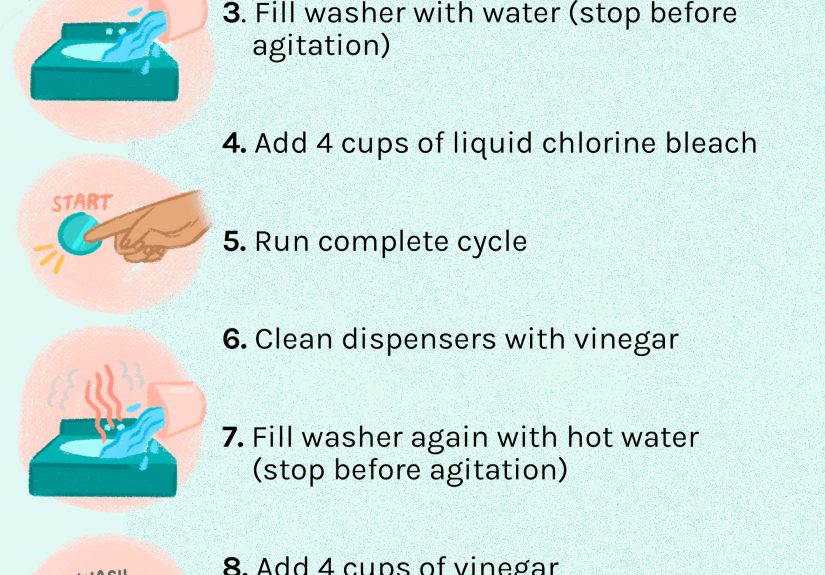

The 10-minute privacy check

- Set the account to private.

- Limit messages to friends/followers only (or turn off message requests where possible).

- Restrict comments, mentions, and tags to friends.

- Turn off content reuse/downloads/remixes where possible.

- Turn off “suggest my account” and contact syncing.

- Review followers together and remove anyone the teen doesn’t recognize.

- Agree on a simple rule: “No posting school names, schedules, or real-time locations.”

Importantly, make it collaborative. Privacy shouldn’t feel like surveillance. Frame it as confidence:

“We’re setting you up so you can enjoy the platform without random chaos.”

Real-World Experiences: What Private-by-Default Could Prevent (and Improve)

The strongest arguments for private-by-default often come from everyday stories: messy, normal, and surprisingly common. Not horror stories. Just the kind of “wait, that happened?” moments that show how fast things can spiral when the default is public.

Experience #1: The accidental audience. A middle schooler posts a funny clip meant for friends. Because the account is public (and because the app’s discovery features are doing their job), the clip gets pushed outside the friend group. Suddenly the comments aren’t inside jokes; they’re strangers arguing, teasing, and nitpicking. The kid didn’t “choose” a public audience. They inherited one from a default setting. Private-by-default would have kept that first post in a smaller circle until the kid was ready for broader visibility.

Experience #2: The “friend of a friend” problem. A teen accepts follow requests because they recognize a name, kind of. The person turns out to be loosely connected: a friend’s older sibling’s friend, or someone from a neighboring school. Nothing dramatic happens, but the teen’s posts are now visible to someone outside their trusted group. Then that person’s friends show up. Visibility grows outward like a ripple, and it’s hard to reverse because the teen doesn’t want to be “rude.” Private-by-default doesn’t eliminate social pressure, but it slows the ripple and makes approval feel like a normal checkpoint, not an awkward exception.

Experience #3: The searchable footprint. A kid uses their real first and last name as a username because it feels “grown-up” and professional. Their profile photo shows a team jersey. Their bio mentions their school mascot. None of this seems dangerous in isolation. But it’s enough to connect identity dots. Private-by-default paired with age-appropriate prompts (“Use a nickname,” “Avoid school identifiers,” “Here’s why”) can prevent kids from accidentally turning their profile into a mini directory listing.

Experience #4: The remix chain reaction. On some platforms, public accounts may allow others to reuse content: remix it, stitch it, download it, or share it elsewhere depending on settings. A teen posts something harmless, but it gets repackaged with a sarcastic caption or stitched into someone else’s content. Now the teen is starring in a storyline they didn’t write. The kid’s original post wasn’t the problem; the default reuse pathways were. Private-by-default with reuse disabled prevents a lot of “my video ended up where?” moments.

Experience #5: The family trust gap. Some parents respond to online risk by tightening control: checking phones constantly, demanding passwords, or banning platforms outright. Teens respond by hiding accounts or using workarounds. Everyone loses. Private-by-default changes the family dynamic because the platform itself starts in a safer mode. That makes it easier for families to focus on conversations (how to handle requests, how to block, how to respond to negativity) instead of policing every tap. The goal isn’t to watch kids; it’s to support them.

These experiences have a common thread: most kids weren’t chasing danger. They were chasing connection. A default setting shouldn’t turn that normal desire into unnecessary risk.

Conclusion: Make Safety the Default, Not the Homework

Kids deserve social spaces online that are designed with their reality in mind: still learning, still growing, still figuring out boundaries. Making kids’ accounts private by default is one of the simplest, most effective design choices platforms can make, because it reduces exposure before anything goes wrong, and it doesn’t require kids to become privacy experts on day one.

Social networks love to say they’re building community. Great. Communities have doors, not open loading docks. Make kids’ accounts private by default, then let teens choose visibility intentionally, with real understanding and real guardrails.