3 hours ago

Winter exposes one of America’s harshest policy failures: too many homeless people must choose between the danger...

Discover Interesting Facts About Life

Stuck between a virus and a cold place: A choice for homeless Americans awaits

‘The Rookie’ Season 8: Everything Fans Should Know

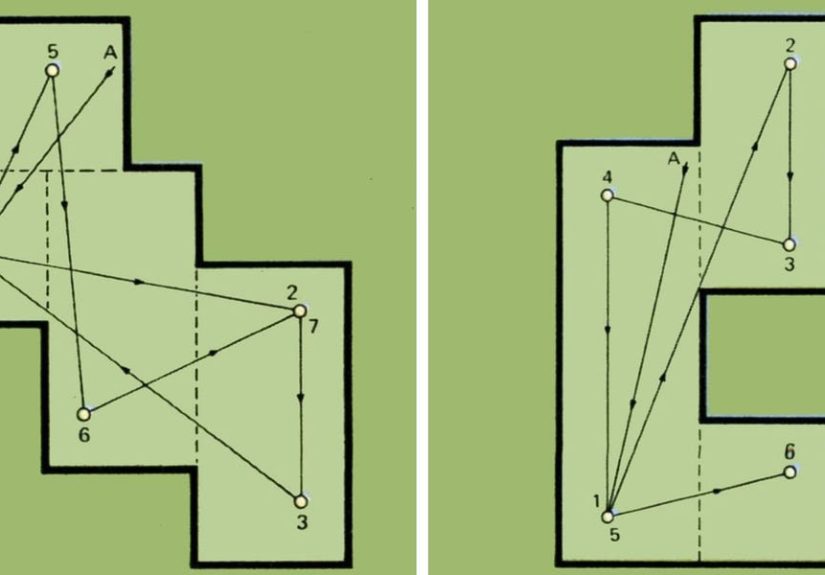

Backyard Putting Green Plans – DIY Putting Green

IS Podcast: How Age and Gender Affect Schizophrenia Symptoms

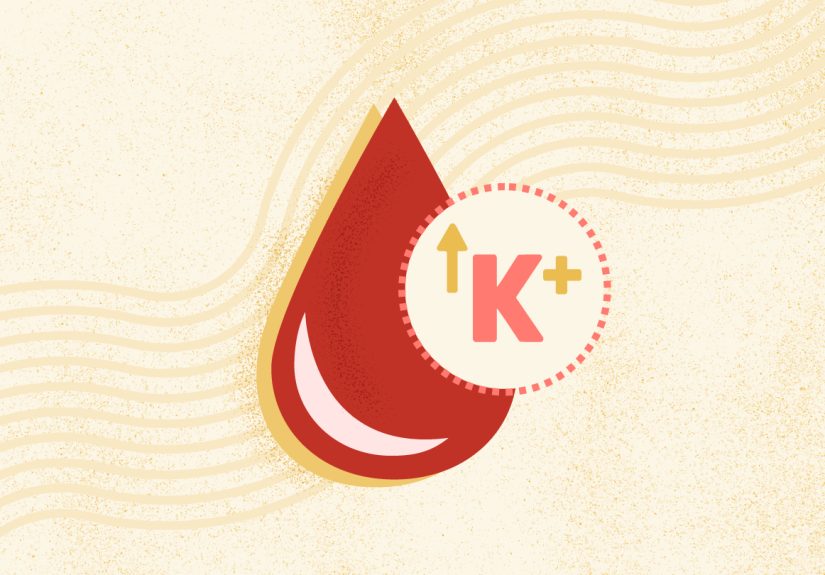

What Is Hyperkalemia? Symptoms, Causes, Diagnosis, Treatment, and Prevention
