Table of Contents

- Why This Gesture-Controlled Robot Arm Build Stands Out

- What Students Actually Learn From a Project Like This

- The Educational Value Goes Beyond Robotics

- Why Gesture Control Makes Robotics More Engaging

- What Makes a Build Like This Work Well in the Classroom

- Challenges That Make the Project Even More Educational

- Specific Examples of How This Project Can Grow

- Why Builds Like This Matter in 2026 and Beyond

- Hands-On Experience: What Building One Actually Feels Like

- Conclusion

Some projects are useful. Some are flashy. And then there are the rare builds that manage to be both while also sneaking in a full engineering lesson before anyone notices. A gesture-controlled robot arm belongs squarely in that last category. At first glance, it looks like a fun maker project: wave your hand, the arm moves, everyone in the room makes their best “Jedi training” face, and the demo earns instant cool points. But underneath the wow factor is a surprisingly rich educational platform.

This kind of build teaches far more than “robot arm goes brrr.” It introduces students and hobbyists to sensors, embedded programming, motion control, communication between devices, calibration, troubleshooting, and the essential truth of engineering: the first version almost never works perfectly, and that is part of the fun. In other words, a gesture-controlled robot arm is not just nifty. It is the kind of project that turns abstract STEM concepts into something you can actually see, test, and improve.

Why This Gesture-Controlled Robot Arm Build Stands Out

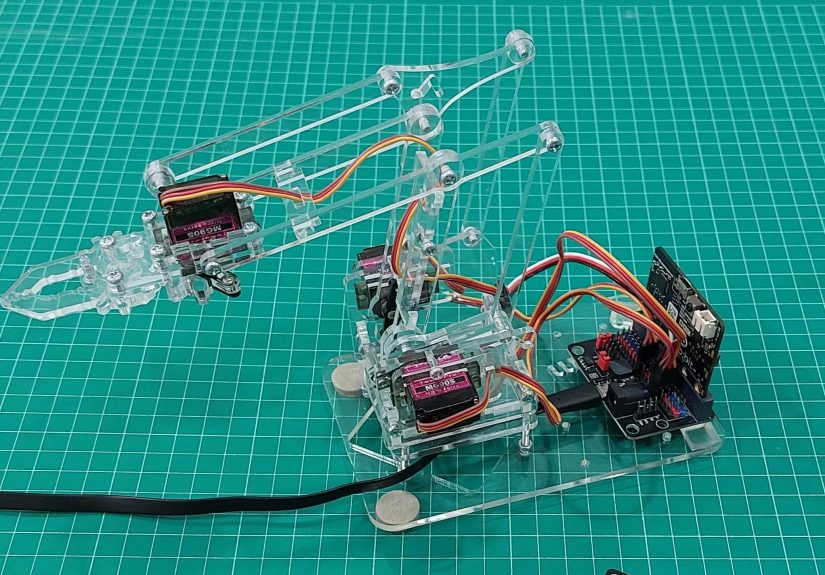

The featured concept is beautifully simple. A small robotic arm is driven by servo motors and controlled by a microcontroller. Instead of using a joystick or a row of buttons, the operator wears or holds a second controller board that detects motion and translates hand gestures into commands. Those commands are then sent wirelessly to the robot arm, which moves its base, joints, or gripper in response.

That basic idea sounds almost magical, but the hardware logic is wonderfully down to earth. A motion-sensing controller reads tilt, orientation, or specific hand positions. Software maps those inputs to actions like rotate left, lift up, lower down, or open the gripper. The receiver board interprets the incoming signal and tells the servos where to move. Suddenly, a student is not just “using a robot.” They are learning how human motion becomes data, how data becomes commands, and how commands become mechanical movement.

That chain of events is the real educational gold. It ties together physical computing, mechanics, and programming in one compact build. It also makes robotics feel approachable. A giant industrial manipulator can seem intimidating. A desk-sized gesture-controlled arm feels like an invitation.

What Students Actually Learn From a Project Like This

1. Sensors Become Less Mysterious

Many beginner electronics projects start with simple inputs like buttons, light sensors, or potentiometers. A gesture-controlled robot arm raises the stakes in a good way. Instead of pressing a button, students rely on motion data. That usually means working with an accelerometer, an inertial measurement unit, or another gesture-sensitive sensor. Once that happens, “sensor input” stops being a vague textbook phrase and becomes something students can feel with their own hands.

Tilt your wrist forward and the arm reaches. Rotate your hand and the base turns. Open or close your hand, and the gripper responds. That immediate feedback helps learners understand that sensors do not “know” gestures in a human sense. They measure physical changes, and code translates those changes into meaningful actions.
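To make "sensors measure, code interprets" a little more concrete, here is a minimal sketch of turning one raw accelerometer reading into tilt angles. It assumes the board is held roughly still so the reading is mostly gravity, and the axis directions and signs are an assumption; real sensor modules differ, so treat `tilt_angles` as a hypothetical helper rather than any particular board's API.

```python
import math

def tilt_angles(ax, ay, az):
    """Return (pitch, roll) in degrees from one accelerometer reading.

    Assumes the reading is dominated by gravity (hand roughly still).
    Axis orientation and sign conventions are assumptions for illustration.
    """
    roll = math.degrees(math.atan2(ay, az))
    pitch = math.degrees(math.atan2(-ax, math.hypot(ay, az)))
    return pitch, roll

# Board lying flat: gravity sits entirely on the z axis.
flat = tilt_angles(0.0, 0.0, 1.0)

# Board rolled 45 degrees: gravity is split evenly between y and z.
rolled = tilt_angles(0.0, 0.7071, 0.7071)

print(flat, rolled)
```

The sensor only ever reports three numbers. The idea of "tilted right" exists purely in the code that reads them.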

2. Servo Control Finally Makes Sense

Servo motors are a robotics classic for good reason. They are compact, affordable, and well-suited to robot joints because they can move to specific positions. In a project like this, each servo usually handles one degree of freedom: base rotation, shoulder movement, elbow action, or gripping. That gives students a practical way to understand how robotic arms are divided into joints and controlled one axis at a time.

Better yet, learners quickly discover that servo control is not just about plugging in a motor and hoping for the best. They need to think about angle limits, smooth movement, mechanical strain, power supply stability, and how one joint’s position affects the motion of the others. This is where a fun weekend build quietly turns into an excellent lesson in real-world robotics.
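Two of those concerns, angle limits and smooth movement, can be sketched in a few lines. The example below is a rough illustration, not a driver for any specific servo library: the safe range of 10 to 170 degrees and the 3-degree step are made-up values that a real build would measure for each joint.

```python
def clamp(value, low, high):
    """Keep value inside the closed range [low, high]."""
    return max(low, min(high, value))

def step_toward(current, target, max_step=3.0, low=10.0, high=170.0):
    """Move `current` toward `target` by at most `max_step` degrees.

    The target is clamped to the joint's safe range first, so a wild
    request can never push the servo against its mechanical stop.
    """
    target = clamp(target, low, high)
    delta = clamp(target - current, -max_step, max_step)
    return clamp(current + delta, low, high)

angle = 90.0
path = []
for _ in range(5):
    # The request (200 degrees) is beyond the safe range on purpose.
    angle = step_toward(angle, 200.0)
    path.append(angle)

print(path)
```

Calling `step_toward` once per control loop means the arm creeps toward its goal instead of snapping there, which tends to be gentler on both the gears and the power supply.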

3. Wireless Communication Feels Useful, Not Abstract

One of the most charming parts of a gesture-controlled robot arm is the separation between the human controller and the machine. The controller board reads motion, then sends commands to the arm wirelessly. That could happen over built-in radio, Bluetooth, infrared, or another lightweight communication method depending on the platform.

For students, that introduces a core engineering idea: systems do not have to live on one board. Input can happen in one place, processing in another, and actuation somewhere else entirely. Once that concept clicks, they begin to think like designers of larger robotic systems, not just tinkerers wiring parts together on a desk.
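One way to picture what travels between the two boards is a tiny fixed-size packet. The sketch below is an assumption for illustration only: the header byte, the four-angle layout, and the one-byte checksum are invented here, not a standard or the protocol of any particular radio module.

```python
import struct

HEADER = 0xA5  # hypothetical start-of-packet marker

def encode_command(base, shoulder, elbow, gripper):
    """Pack four servo angles (0-255 each) into a 6-byte packet."""
    body = struct.pack("<5B", HEADER, base, shoulder, elbow, gripper)
    checksum = sum(body) & 0xFF  # simple additive checksum
    return body + bytes([checksum])

def decode_command(packet):
    """Return the four angles, or None if the packet looks damaged."""
    if len(packet) != 6 or packet[0] != HEADER:
        return None
    if sum(packet[:5]) & 0xFF != packet[5]:
        return None
    return tuple(packet[1:5])

packet = encode_command(90, 45, 120, 30)

# Flip one bit in the base angle to simulate radio interference.
corrupted = bytes([packet[0], packet[1] ^ 0x01]) + packet[2:]

print(decode_command(packet), decode_command(corrupted))
```

The controller side would call something like `encode_command`, and the arm side something like `decode_command`, ignoring any packet that comes back as `None` rather than moving on bad data.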

4. Debugging Becomes a Skill, Not a Punishment

No honest robotics article should pretend these builds work perfectly on the first try. Sometimes the arm jitters. Sometimes the gesture thresholds are too sensitive. Sometimes the controller is tilted differently than the software expects. Sometimes a servo hums in protest like it has opinions about your wiring choices.

And that is excellent news for learning.

A gesture-controlled robot arm creates the kind of debugging experience that actually teaches students how engineers think. Is the problem mechanical, electrical, or software-based? Is the radio link dropping packets? Is the accelerometer data noisy? Is the arm trying to move beyond its physical limits? Troubleshooting a system like this forces learners to test assumptions one layer at a time.

The Educational Value Goes Beyond Robotics

Projects like this are often described as STEM builds, but that label can feel a little too neat. In reality, a gesture-controlled robot arm spills into several areas at once.

Engineering Design

Students have to think about structure, range of motion, load limits, and how to mount parts so that the arm can move without colliding with itself. Even a small acrylic or lightweight arm teaches big lessons about leverage, stability, and mechanical tradeoffs.

Computer Science

At the code level, the project becomes an exercise in logic. Raw input values must be read, filtered, mapped, and translated into motor commands. Beginners practice conditionals, variables, loops, and calibration routines. More advanced learners can explore smoothing, dead zones, and state-based control.
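The "mapped" and "dead zones" steps are easier to see in code than to describe. This is a minimal sketch under assumed numbers: a usable tilt range of plus or minus 45 degrees, a 5-degree dead zone, and a servo that accepts 0 to 180 degrees. All function names are hypothetical.

```python
def apply_dead_zone(value, width):
    """Treat small inputs as zero so a slightly unsteady hand does not move the arm."""
    return 0.0 if abs(value) < width else value

def map_range(value, in_min, in_max, out_min, out_max):
    """Linearly rescale value from the input range to the output range,
    clamping inputs that fall outside the input range."""
    value = max(in_min, min(in_max, value))
    fraction = (value - in_min) / (in_max - in_min)
    return out_min + fraction * (out_max - out_min)

def tilt_to_servo(tilt_deg, dead_zone=5.0):
    """Convert a hand tilt in degrees into a servo angle in degrees."""
    tilt = apply_dead_zone(tilt_deg, dead_zone)
    return map_range(tilt, -45.0, 45.0, 0.0, 180.0)

for tilt in (0.0, 3.0, 22.5, 45.0, -90.0):
    print(tilt, tilt_to_servo(tilt))
```

A level hand and a hand tilted by 3 degrees both land on the servo's midpoint, which is exactly what keeps the arm from twitching when someone is simply trying to hold still.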

Math

Robotic arms are sneaky math teachers. Students deal with ranges, angles, offsets, scaling, and coordinate thinking almost by accident. If the project grows more advanced, it can naturally introduce forward and inverse kinematics, which sound intimidating until a learner realizes what they describe: forward kinematics works out where the gripper ends up for a given set of joint angles, and inverse kinematics works out which joint angles will put it at a chosen spot.
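For a flavor of the forward direction, here is a rough sketch for a simplified arm with just two links moving in a flat plane. The link lengths and the convention that the elbow angle is measured relative to the upper arm are assumptions; a real arm with a rotating base needs a third dimension.

```python
import math

def forward_kinematics(l1, l2, shoulder_deg, elbow_deg):
    """Return the (x, y) position of the tip of a two-link planar arm.

    l1, l2: link lengths (any consistent unit).
    shoulder_deg: upper-arm angle measured from the x axis.
    elbow_deg: forearm angle measured relative to the upper arm.
    """
    a = math.radians(shoulder_deg)
    b = math.radians(shoulder_deg + elbow_deg)
    x = l1 * math.cos(a) + l2 * math.cos(b)
    y = l1 * math.sin(a) + l2 * math.sin(b)
    return x, y

straight_out = forward_kinematics(10.0, 10.0, 0.0, 0.0)
straight_up = forward_kinematics(10.0, 10.0, 90.0, 0.0)
bent_elbow = forward_kinematics(10.0, 10.0, 0.0, 90.0)

print(straight_out, straight_up, bent_elbow)
```

Inverse kinematics runs the same relationship backward, which is harder because some target points have two valid elbow positions and others cannot be reached at all.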

Human-Machine Interaction

This is one of the most underrated parts of the build. Gesture control forces students to think about interface design. Which gestures feel intuitive? Which ones are tiring? Which ones are likely to be misread? How much delay is acceptable before the arm feels sluggish? These are not just robotics questions. They are design questions.

Why Gesture Control Makes Robotics More Engaging

Traditional robot control methods work just fine. Buttons are reliable. Joysticks are familiar. Pre-programmed routines are powerful. But gesture control adds a layer of immediacy that changes how people relate to the machine. It feels natural. It feels physical. It feels like the robot is paying attention.

That matters in education. Students who might be lukewarm about “another coding project” often perk up when the project responds to body motion. The barrier to entry feels lower because the interaction starts with a gesture they already understand rather than a command syntax they have to memorize.

That does not mean gesture control is always the best interface. It can be less precise than sliders or joysticks, and extended mid-air control can get tiring fast. But in a classroom or maker setting, its value lies in engagement. Gesture control turns robotics into something students can inhabit, not just observe.

What Makes a Build Like This Work Well in the Classroom

Keep the Arm Small and Safe

A compact arm is ideal for education. It is cheaper, easier to power, less intimidating, and safer to use. Nobody needs a machine that can bench-press a textbook just to learn how servo mapping works. A light-duty arm is enough to demonstrate grasping, positioning, and motion control without turning the lab into a tiny factory.

Use Off-the-Shelf Components

The most teachable builds usually rely on parts students can identify and replace: hobby servos, common microcontroller boards, standard sensor modules, jumper wires, and simple structural parts. That keeps the project accessible and prevents it from becoming a mysterious sealed product. When students can name every part, they can learn from every part.

Start With Simple Gesture Mapping

It is tempting to aim for cinematic fluid motion right away. Resist the urge. A better starting point is a small set of clear commands. Tilt left, arm rotates left. Tilt right, arm rotates right. Pitch forward, gripper closes. Pitch backward, gripper opens. Once that works, students can expand the gesture vocabulary and improve the control logic.
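That starter vocabulary fits in one small function. The sketch below assumes tilt angles are already available in degrees, that negative roll means "tilted left," and that positive pitch means "pitched forward." Those sign conventions, the 20-degree threshold, and the command names are all illustrative choices.

```python
def gesture_to_command(pitch_deg, roll_deg, threshold=20.0):
    """Map a hand tilt to one of a few discrete arm commands.

    Roll is checked first, so a diagonal tilt rotates the base
    rather than moving the gripper.
    """
    if roll_deg <= -threshold:
        return "rotate_left"
    if roll_deg >= threshold:
        return "rotate_right"
    if pitch_deg >= threshold:
        return "close_gripper"
    if pitch_deg <= -threshold:
        return "open_gripper"
    return "hold"

print(gesture_to_command(0.0, -30.0))
print(gesture_to_command(25.0, 0.0))
print(gesture_to_command(5.0, 5.0))
```

Anything inside the threshold returns `"hold"`, so the arm stays put unless the operator clearly means it.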

Build in Calibration Time

Gesture-controlled systems need calibration the way coffee needs caffeine. If students do not calibrate neutral hand positions, angle thresholds, and servo limits, the project can drift from “clever” to “possessed” in a hurry. Calibration is not a boring setup step. It is part of the lesson in making real systems behave predictably.
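A neutral-position calibration can be as simple as averaging a few readings while the operator holds a relaxed pose, then subtracting that average from everything that follows. The sketch below uses made-up sample values and hypothetical function names.

```python
def measure_neutral(samples):
    """Average (pitch, roll) readings taken while the hand is at rest."""
    count = len(samples)
    pitch = sum(p for p, _ in samples) / count
    roll = sum(r for _, r in samples) / count
    return pitch, roll

def apply_calibration(reading, neutral):
    """Express a reading relative to the operator's own resting pose."""
    return reading[0] - neutral[0], reading[1] - neutral[1]

# Made-up readings from a hand that rests slightly pitched forward.
rest_samples = [(4.0, -2.0), (6.0, -1.0), (5.0, -3.0)]
neutral = measure_neutral(rest_samples)

corrected = apply_calibration((25.0, -2.0), neutral)
print(neutral, corrected)
```

Because the offset is measured per person, the same thresholds can work for a student who holds the controller level and one who naturally lets it droop.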

Challenges That Make the Project Even More Educational

A good classroom build does not avoid problems. It uses them. Gesture-controlled robot arms come with several predictable challenges, and each one teaches something useful.

- Noisy sensor readings: Students learn about filtering and why real-world data is messy.

- Servo jitter: They discover the importance of stable power, grounding, and signal timing.

- Mechanical flex: They see how material choice affects accuracy and repeatability.

- Latency: They understand why communication speed and software efficiency matter.

- Control mapping: They learn that the best technical solution is not always the most intuitive one for a human user.

That last point is especially important. Engineering is not only about making things work. It is about making them usable. A gesture-controlled robot arm is a perfect little laboratory for that lesson.
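To make the first challenge on that list concrete, here is one common way to tame noisy readings: an exponential moving average. It is only one of several possible filters, and the `alpha` value of 0.5 is an arbitrary starting point that students would tune by feel.

```python
def smooth(readings, alpha=0.5):
    """Exponential moving average over a sequence of readings.

    alpha near 1.0 trusts new readings (fast but jittery);
    alpha near 0.0 trusts the running estimate (smooth but laggy).
    """
    filtered = []
    estimate = None
    for value in readings:
        if estimate is None:
            estimate = value  # first reading seeds the filter
        else:
            estimate = alpha * value + (1 - alpha) * estimate
        filtered.append(estimate)
    return filtered

# A steady 10-degree tilt with one spurious spike in the middle.
noisy = [10.0, 10.0, 30.0, 10.0, 10.0]
print(smooth(noisy))
```

The spike is softened rather than removed, and the estimate takes a few readings to settle back. That tradeoff between smoothness and lag connects the noise challenge directly to the latency one.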

Specific Examples of How This Project Can Grow

Once the basic arm is working, the project can evolve in several directions.

Pick-and-Place Tasks

Students can move blocks, sort small objects by color or shape, or complete timed challenges. That turns the arm into a simple manufacturing simulator, which is a great way to discuss automation without needing an actual factory floor.

Assistive Technology Concepts

Teachers can use the build to introduce discussions about prosthetics, teleoperation, and accessible control systems. The exact classroom arm may be simple, but the design ideas behind it connect to very serious real-world applications.

Smarter Control Systems

More advanced learners can add smoothing algorithms, preset poses, object detection, or even machine vision. The original gesture interface can stay in place while the software stack becomes more sophisticated.
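Preset poses are a gentle first step in that direction. The sketch below stores a couple of named poses and generates the in-between frames needed to glide from one to the other. The pose names and joint angles are invented for illustration.

```python
# Hypothetical joint angles in degrees; a real arm would need measured values.
PRESET_POSES = {
    "home":   {"base": 90, "shoulder": 90,  "elbow": 90, "gripper": 30},
    "pickup": {"base": 45, "shoulder": 120, "elbow": 60, "gripper": 80},
}

def interpolate_pose(start, end, steps):
    """Return `steps` evenly spaced poses, ending exactly at `end`."""
    frames = []
    for i in range(1, steps + 1):
        t = i / steps
        frames.append({
            joint: start[joint] + t * (end[joint] - start[joint])
            for joint in start
        })
    return frames

frames = interpolate_pose(PRESET_POSES["home"], PRESET_POSES["pickup"], 3)
for frame in frames:
    print(frame)
```

A gesture could then trigger a whole pose ("go to pickup") instead of nudging one joint at a time, while the familiar tilt controls remain available for fine adjustments.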

Comparing Control Methods

One of the best lessons is to let students compare gesture control with joysticks, buttons, or scripted motion. Which is faster? Which is more accurate? Which feels easiest to learn? Now the project becomes an experiment in interface design and robotics performance.

Why Builds Like This Matter in 2026 and Beyond

Robotics education is moving steadily toward hands-on, affordable, modular systems. That is not just a trend; it is a practical response to how students learn best. A low-cost robot arm that can be assembled from accessible parts and controlled through intuitive gestures checks many of the right boxes. It is portable, visual, multidisciplinary, and highly motivating.

Just as important, it gives students a taste of how modern robotic systems are really built: sensing, communication, actuation, planning, and user interaction all working together. That systems-level thinking is harder to teach through isolated worksheets or one-off coding drills.

And let us be honest: a robot arm you control by moving your hand is just more memorable than a lot of classroom exercises. Nobody rushes home excited to tell their family about a well-formatted spreadsheet. They absolutely will talk about the tiny robot that obeyed their wrist flick like a mechanical sidekick.

Hands-On Experience: What Building One Actually Feels Like

There is something uniquely satisfying about building a gesture-controlled robot arm from scratch because the project refuses to stay in just one lane. One minute you are dealing with code, the next you are tightening a bracket, then you are back at your computer wondering why the gripper closes every time you sneeze near the sensor. That variety is exactly why the experience sticks. It does not feel like a single lesson. It feels like a miniature engineering career squeezed onto a workbench.

In practice, the experience usually begins with overconfidence. The parts look manageable. The servos are small. The controller board promises motion data. You think, “How hard can this be?” A few hours later, you have learned a timeless robotics truth: simple-looking projects contain multitudes. The arm might move beautifully in one direction and act like a melodramatic octopus in another. The gesture thresholds may work perfectly for one person and terribly for someone else. A power issue can masquerade as a software issue, and a software issue can look suspiciously like bad mechanics. Welcome to the club.

But that is where the real value lives. Every little hiccup becomes a lesson in observation. You start noticing patterns. The arm jitters only when two servos move at once. The controller behaves differently depending on how it is mounted on the hand. A tiny adjustment to the neutral position suddenly makes the whole interface feel smarter. Those are not glamorous breakthroughs, but they are exactly the sort of insights that help students move from “following instructions” to “understanding systems.”

The most rewarding moment is rarely the first successful motion. It is the first controlled motion that feels intentional. When the arm rotates because you rotated your wrist and not because the sensor got confused by gravity, that is when the build clicks. The machine stops being a pile of parts and starts behaving like a designed object. That leap from assembly to behavior is thrilling, even on a small desk-sized robot.

Another great part of the experience is how naturally collaboration appears. One person watches the serial data, another steadies the arm, another tests gestures, and someone in the corner inevitably says, “Try changing the threshold by five degrees.” Suddenly the build is not just about robotics. It is about teamwork, communication, and learning how to test ideas without turning every problem into a dramatic blame festival. In other words, it teaches soft skills while pretending to be all about hardware.

There is also a creative side that does not get enough attention. Once the basic control works, people immediately start imagining improvements. Could the arm sort objects? Could it mimic a glove more precisely? Could it be mounted on a mobile base? Could it help teach inverse kinematics? Could it pick up marshmallows without turning them into abstract sculpture? The project invites iteration. It does not end with “it works.” It begins there.

That may be the strongest argument for this kind of build as an educational project. It rewards curiosity at every skill level. Beginners can celebrate making the arm respond at all. Intermediate makers can refine the interface and mechanical design. Advanced students can explore filtering, control logic, vision, or autonomous behaviors. The project scales with the learner, which is exactly what good educational hardware should do.

And yes, it is also just fun. That matters more than some people like to admit. Fun keeps students at the table long enough to wrestle with the difficult parts. Fun makes debugging feel like a puzzle instead of a punishment. Fun turns a robot arm from a lesson plan into a memory. A gesture-controlled robot arm may start as a nifty educational build, but the experience of making one often becomes the moment someone realizes engineering is not just useful. It is genuinely exciting.

Conclusion

A gesture-controlled robot arm is more than a flashy demo piece. It is a compact, affordable, and deeply teachable robotics platform that blends mechanics, programming, sensing, communication, and design into one memorable project. It helps learners see how physical motion becomes data, how code turns that data into action, and how even a small robot can open the door to serious engineering thinking.

That is what makes this build so clever. It meets students where they are, grabs their attention with intuitive control, and then quietly teaches them the real substance of robotics. Not bad for a machine that starts with a wave of the hand and ends with a room full of people suddenly interested in servos, sensors, and solving problems.