Table of Contents

- Why Large Files Matter on Linux

- Option 1: Use the find Command to Search by File Size

- Option 2: Use du and sort to Find Large Directories

- Option 3: Use ncdu for an Interactive Terminal View

- Option 4: Use a Graphical Disk Usage Analyzer

- Common Places Where Large Files Hide

- How to Safely Decide What to Delete

- Quick Cheat Sheet: Best Commands to Find Large Files on Linux

- Real-World Experience: What Actually Works Best

- Conclusion

Running out of disk space on Linux can feel like your computer is quietly hoarding snacks under the bed. One day everything is fine, and the next day your package manager complains, your Docker build fails, your database refuses to behave, and your system starts acting like it needs a vacation. The good news? Linux gives you several reliable ways to find large files quickly, whether you love the terminal, prefer a visual tool, or just want the fastest path to freeing up storage.

In this guide, you’ll learn how to find large files on Linux using four easy options: the find command, the du command with sort, the interactive ncdu utility, and a graphical disk usage analyzer. These methods work well on popular Linux distributions such as Ubuntu, Debian, Fedora, Rocky Linux, Linux Mint, Arch, and openSUSE. Some commands may vary slightly by distribution, but the core ideas remain the same.

Before deleting anything, remember this golden Linux rule: if you do not know what a file does, do not remove it with heroic confidence. Some large files are safe to delete, such as old downloads, logs, archives, cache files, ISO images, and forgotten backups. Others may belong to databases, virtual machines, containers, or system services. Finding the files is step one. Judging them wisely is step two. Deleting them while half-asleep is step “please don’t.”

Why Large Files Matter on Linux

Large files are not automatically bad. A 20 GB virtual machine image may be perfectly normal. A 9 GB database dump may be useful. A giant video file in your home directory may be the project you forgot to finish. The problem begins when large files hide in places you do not regularly check, such as /var/log, /tmp, ~/Downloads, Docker directories, backup folders, browser caches, or application data directories.

When a Linux partition becomes full, you may see errors like “No space left on device,” failed package updates, broken application launches, stalled logs, or services that refuse to restart. On servers, low disk space can become a serious reliability issue. On desktops, it is mostly annoying, but still capable of turning your workflow into a digital traffic jam.

A smart approach is to start broad, then narrow down. First check overall disk usage with `df -h`.

This shows mounted filesystems and available space in a human-readable format. Once you know which partition is full, use one of the four methods below to locate the biggest files or directories.
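If you would rather not eyeball the table, the same check can be sketched as a small filter. This is a minimal bash example, not a standard tool: the function name `over_threshold` and the 90 percent limit are made up for illustration, and it assumes the POSIX output format of `df -P` with mount points that contain no spaces.

```shell
# Hypothetical helper: read `df -P` output on stdin and print the mount
# points whose usage is at or above a percentage limit (default 90).
over_threshold() {
    local limit=${1:-90}
    awk -v limit="$limit" '
        NR > 1 {                      # skip the header line
            use = $5                  # Capacity column, e.g. "95%"
            sub(/%/, "", use)
            if (use + 0 >= limit) print $6, $5
        }'
}

# List filesystems that are at least 90% full (may print nothing).
df -P | over_threshold 90
```

Any mount point it prints is a good starting place for the methods that follow.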

Option 1: Use the find Command to Search by File Size

The find command is one of the most direct ways to locate large files on Linux. It searches through directories based on conditions you define, including file type, name, modification time, owner, and size. If you want to find files larger than a specific size, find is usually the first tool to reach for.

Find Files Larger Than 500 MB

To search your entire system for regular files larger than 500 MB, run `sudo find / -type f -size +500M -print`.

Here is what the command means:

- `sudo` gives permission to search directories your user may not normally access.

- `find /` starts searching from the root directory.

- `-type f` limits results to regular files.

- `-size +500M` finds files larger than 500 megabytes.

- `-print` displays the matching file paths.

You can adjust the size depending on your situation, for example `-size +100M` for files over 100 MB or `-size +1G` for files over 1 GB.

The plus sign matters. In `-size +1G`, the + means “larger than.” Without a sign, GNU find rounds each file size up to the given unit and matches only files that equal the number exactly, so `-size 1G` matches any non-empty file up to 1 GiB. You can also use `-size -100M` to find files smaller than 100 MB, though that is less useful when hunting storage goblins.
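The sign rules are easier to trust once you have seen them on files of known size. Here is a minimal sketch on a scratch directory, assuming GNU find and coreutils (`truncate`, `-printf`). The file names are invented for the demo.

```shell
# Scratch directory with sparse files of known apparent size.
demo_dir=$(mktemp -d)
truncate -s 3M  "$demo_dir/big.img"     # 3 MiB
truncate -s 1M  "$demo_dir/exact.img"   # exactly 1 MiB
truncate -s 10K "$demo_dir/small.log"   # 10 KiB, rounds up to one 1M unit

# +1M: more than one unit after rounding up to whole MiB
larger=$(find "$demo_dir" -type f -size +1M -printf '%f\n' | sort)
# 1M with no sign: exactly one unit after rounding up
exact=$(find "$demo_dir" -type f -size 1M -printf '%f\n' | sort)
# -1M: fewer than one unit, which only empty files satisfy
smaller=$(find "$demo_dir" -type f -size -1M -printf '%f\n' | sort)

printf 'larger than 1M:\n%s\n' "$larger"
printf 'exactly 1M (rounded up):\n%s\n' "$exact"
printf 'smaller than 1M:\n%s\n' "$smaller"
```

The rounding is why `-size -1M` finds nothing here: a 10 KiB file already counts as one whole unit. When you need a precise lower bound, a smaller unit such as `k` avoids the surprise.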

Show File Sizes with Results

The basic find output shows paths, but not sizes. To include readable sizes, combine find with ls, for example `find . -type f -size +100M -exec ls -lh {} \;`.

This prints file permissions, owner, size, date, and path. If the directory contains filenames with spaces, this method handles them safely because each file is passed individually.

Find the Top Large Files

If you want a sorted list, try a pipeline such as `sudo find / -type f -printf '%s %p\n' 2>/dev/null | sort -nr | head -n 20`.

This command prints file sizes in bytes, sorts them from largest to smallest, and shows the top 20. The 2>/dev/null part hides permission-denied errors so your screen does not become a dramatic wall of complaints.
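If you run this kind of search often, it can be wrapped in a function. The sketch below is one possible shape, not a standard utility: `largest_files` is a hypothetical name, `-xdev` is an added assumption to keep the search on one filesystem, and `-printf` requires GNU find.

```shell
# Hypothetical helper: print the N largest regular files under a directory
# as "<bytes><TAB><path>", largest first.
largest_files() {
    local dir=${1:-.} count=${2:-20}
    find "$dir" -xdev -type f -printf '%s\t%p\n' 2>/dev/null \
        | sort -rn \
        | head -n "$count"
}

# Try it on a scratch directory with files of known size (names invented).
scratch=$(mktemp -d)
truncate -s 5M "$scratch/vm disk.img"
truncate -s 2M "$scratch/dump.sql"
truncate -s 1K "$scratch/notes.txt"

top_two=$(largest_files "$scratch" 2)
printf '%s\n' "$top_two"
```

The tab separator keeps paths with spaces intact, so the result can be passed to `cut -f2` when only the paths are needed.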

Best for: quickly finding files above a specific size threshold.

Option 2: Use du and sort to Find Large Directories

Sometimes the problem is not one massive file. It may be a directory full of thousands of medium-sized files. That is where du shines. The du command estimates file and directory space usage, making it ideal for discovering which folders are eating your disk.

Find the Largest Directories in the Current Folder

Move into the directory you want to inspect, then run `du -sh * | sort -h`.

This gives you a readable list of files and folders, sorted by size, with the largest items at the bottom. To show the largest first, use `du -sh * | sort -hr`.

The options are simple:

- `du` estimates disk usage.

- `-s` summarizes each item instead of listing every nested file.

- `-h` makes sizes human-readable, such as MB or GB.

- `sort -h` sorts human-readable sizes correctly.

- `-r` reverses the sort order.
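To see the ranking in action without touching real data, here is a minimal sketch on a scratch directory. The directory and file names are made up for illustration, and it assumes GNU coreutils (`mktemp`, `dd` with `status=none`, `du`, `sort -h`).

```shell
# Build a scratch tree with one heavy folder and one light folder.
scratch=$(mktemp -d)
mkdir -p "$scratch/videos" "$scratch/notes"
dd if=/dev/urandom of="$scratch/videos/clip.bin" bs=1M count=3 status=none
dd if=/dev/urandom of="$scratch/notes/todo.txt"  bs=1K count=4 status=none

# Summarize each top-level item and rank the results, largest first.
ranked=$(cd "$scratch" && du -sh * | sort -hr)
printf '%s\n' "$ranked"

# du separates size and name with a tab, so cut can pull out the name.
largest=$(printf '%s\n' "$ranked" | head -n 1 | cut -f2)
printf 'largest item: %s\n' "$largest"
```

Real data is written here instead of sparse files because `du` reports blocks actually allocated on disk, not the apparent file length.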

Scan One Level Deep

To inspect a larger directory without drowning in details, use `du -h --max-depth=1 /var | sort -hr`.

This shows the size of each top-level directory inside /var, plus the total for /var itself. It is especially useful because /var often contains logs, caches, package data, container files, and other storage-heavy items.

Include Hidden Files and Folders

One small trap: shell globbing with * does not include hidden files or directories. If you are scanning your home folder, hidden directories such as ~/.cache, ~/.local, and ~/.config may contain significant data. A safer command is `du -sh .[!.]* * 2>/dev/null | sort -hr`.

This includes most hidden and visible items in the current directory. Do not panic if .cache appears near the top. Cache folders often grow large, but you should still review what is inside before deleting anything.
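If you want every entry, whatever its name, one alternative is to let find list the directory instead of relying on the shell glob. This is a different technique from the glob approach, sketched under the assumption of GNU find and coreutils. The function name `du_all_entries` and the scratch names are hypothetical.

```shell
# Hypothetical helper: size every direct child of a directory, hidden or
# not, and rank the results largest first.
du_all_entries() {
    local dir=${1:-.}
    find "$dir" -mindepth 1 -maxdepth 1 -exec du -sh {} + 2>/dev/null | sort -hr
}

# Scratch directory where the hidden folder is the heavy one.
scratch=$(mktemp -d)
mkdir -p "$scratch/.cache" "$scratch/docs"
dd if=/dev/urandom of="$scratch/.cache/blob.bin" bs=1M count=2 status=none
dd if=/dev/urandom of="$scratch/docs/report.txt" bs=1K count=8 status=none

glob_view=$(cd "$scratch" && du -sh * | sort -hr)   # plain glob misses .cache
full_view=$(du_all_entries "$scratch")

printf 'glob view:\n%s\n\nfull view:\n%s\n' "$glob_view" "$full_view"
```

In the scratch run, the plain glob reports only `docs`, while the find-based listing puts `.cache` at the top.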

Best for: finding large directories and understanding where disk usage is concentrated.

Option 3: Use ncdu for an Interactive Terminal View

If find is the detective and du is the accountant, ncdu is the friendly storage tour guide. It gives you an interactive, text-based interface for exploring disk usage directly in the terminal. This makes it especially useful on remote servers where you do not have a graphical desktop.

Install ncdu

On Ubuntu or Debian: `sudo apt install ncdu`

On Fedora: `sudo dnf install ncdu`

On Arch Linux: `sudo pacman -S ncdu`

Scan a Directory

To scan your home directory: `ncdu ~`

To scan the root filesystem: `sudo ncdu /`

Once the scan finishes, you can move through directories with the arrow keys, press Enter to open a folder, and quickly see which files or directories are largest. It feels a little like using a file manager from the 1990s, but in the best possible way: fast, focused, and not interested in wasting your time.

Stay on One Filesystem

When scanning from /, you may want to avoid crossing into mounted drives or special filesystems. Use `sudo ncdu -x /`.

The -x option keeps the scan on the same filesystem, which helps avoid accidentally scanning mounted backup drives, network shares, or other partitions.

Best for: exploring disk usage interactively, especially on servers or via SSH.

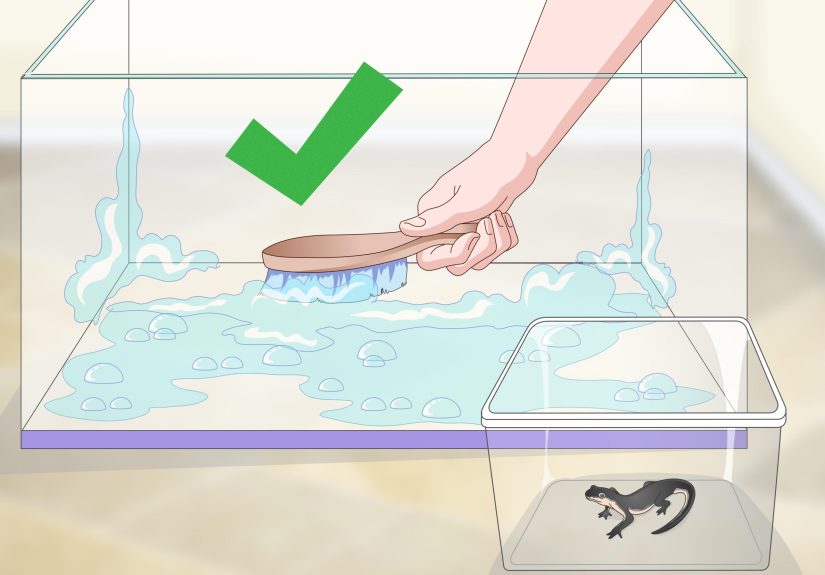

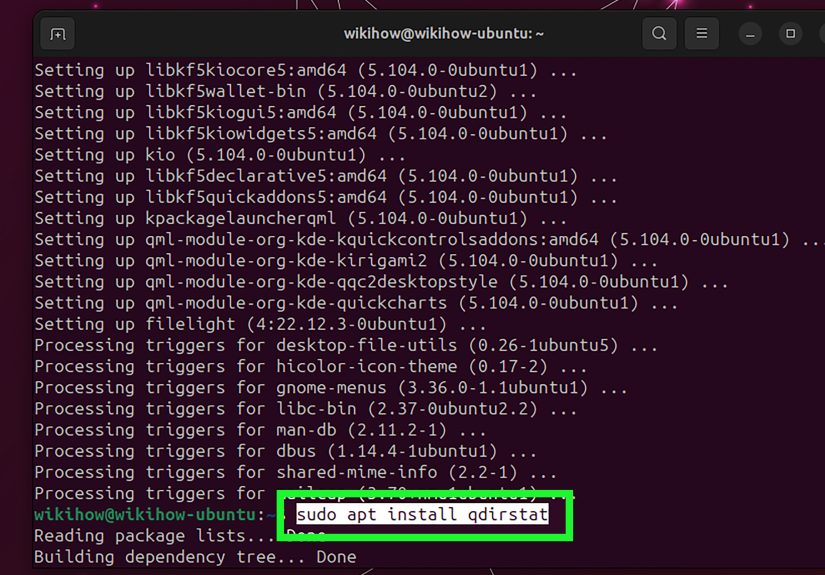

Option 4: Use a Graphical Disk Usage Analyzer

Not everyone wants to solve disk problems by typing commands into a black rectangle. If you prefer a visual approach, Linux desktops offer graphical disk usage tools. On GNOME-based systems, the most common option is Disk Usage Analyzer, also known as Baobab.

Install Disk Usage Analyzer

On Ubuntu or Debian, you can install it with `sudo apt install baobab`.

On Fedora, use `sudo dnf install baobab`.

You can usually launch it from your application menu by searching for “Disk Usage Analyzer.” You can also run `baobab` from a terminal.

Scan Your Home Folder or Entire Disk

Disk Usage Analyzer can scan specific folders, storage devices, and sometimes remote locations depending on your desktop environment and setup. It presents storage usage in a tree view and a visual chart, making it easy to spot oversized folders at a glance.

This option is excellent for newer Linux users because it removes the fear of command-line mistakes. You can click through folders, see what is taking space, and decide what needs attention. It is also helpful for visual thinkers who want to see disk usage patterns instead of reading rows of terminal output.

Best for: desktop users who want a visual way to find large folders and files.

Common Places Where Large Files Hide

Once you know the tools, it helps to know where to look. Large files often gather in predictable places:

- `~/Downloads` for installers, ISO images, videos, and archives.

- `~/.cache` for application and browser caches.

- `/var/log` for system and service logs.

- `/var/cache` for package manager caches.

- `/var/lib/docker` for Docker images, containers, and volumes.

- `/tmp` for temporary files that did not get cleaned up.

- Project folders containing build artifacts, backups, databases, or media exports.

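If Docker is installed, it can consume a surprising amount of space. Instead of manually deleting files inside Docker directories, use Docker’s own cleanup commands after reviewing what is safe, for example `docker system df` to see what is using space and `docker system prune` to remove unused data.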

Be careful with prune commands because they can remove unused containers, networks, images, and build cache. Useful? Yes. Magical? Also yes. Harmless in every situation? Absolutely not.

How to Safely Decide What to Delete

Finding large files is only half the job. Before removing anything, ask a few questions:

- Do I recognize this file?

- Is it in my home directory or a system directory?

- Was it recently modified?

- Does an application or service depend on it?

- Can I move it to external storage instead of deleting it?

- Do I have a backup?

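To inspect a file before deleting it, use commands like `ls -lh`, `file`, and `stat` on its path, or `sudo lsof` on the path to see whether a running process still has it open.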

For logs, do not blindly delete active log files. Instead, consider log rotation, service-specific cleanup, or truncation only when appropriate. For example, run `journalctl --disk-usage` followed by `sudo journalctl --vacuum-time=7d`.

This checks the size of systemd journal logs and removes archived journal data older than seven days. That is much safer than stomping through /var/log like a storage-themed monster truck.
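For plain text logs where truncation really is appropriate, emptying the file in place is gentler than removing it. When a running service still holds a deleted log open, the space is not released until that service closes the file. Here is a minimal sketch on a scratch file, assuming GNU `stat`; the log content is invented.

```shell
# Scratch "log" file standing in for a real one.
log_file=$(mktemp)
printf 'line %s\n' 1 2 3 > "$log_file"

size_before=$(stat -c %s "$log_file")
inode_before=$(stat -c %i "$log_file")

: > "$log_file"    # truncate in place: same file, zero length

size_after=$(stat -c %s "$log_file")
inode_after=$(stat -c %i "$log_file")

printf 'size: %s -> %s bytes\n' "$size_before" "$size_after"
printf 'inode: %s -> %s\n' "$inode_before" "$inode_after"
```

Because the inode does not change, a process that already has the file open keeps writing to the same file. On a real system, truncating a log under /var/log usually needs root and throws away history, so rotation is still the better default.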

Quick Cheat Sheet: Best Commands to Find Large Files on Linux

| Goal | Command |
|---|---|
| Check disk space | `df -h` |
| Find files over 500 MB | `find / -type f -size +500M -print` |
| Find large files with sizes | `find . -type f -size +100M -exec ls -lh {} \;` |
| Show largest folders | `du -sh * \| sort -hr` |
| Scan one directory level | `du -h --max-depth=1 /var \| sort -hr` |
| Use interactive terminal view | `ncdu /path` |
| Open graphical analyzer | `baobab` |

Real-World Experience: What Actually Works Best

In everyday Linux use, the best method depends less on what is technically “most powerful” and more on what problem you are facing. When a server is nearly full and you are connected through SSH, ncdu is often the fastest way to understand the mess. It gives you a clear ranked view, lets you move into suspicious directories, and helps you avoid running five different commands just to answer one question: “What ate my disk?”

For emergency checks, I usually start with df -h. That tells me which filesystem is in trouble. Then I move to du -h --max-depth=1 on the suspicious mount point. If /var is huge, I scan /var. If /home is huge, I scan user directories. This top-down method is faster than searching the entire system immediately. It is like checking which room smells smoky before opening every drawer in the house.

The find command is excellent when I already know what kind of file I’m hunting. For example, if a developer workstation is full, I might search for files larger than 1 GB inside the home directory. That often reveals virtual machine disks, database exports, old screen recordings, forgotten archives, or build artifacts. On servers, find can reveal large log files, backup dumps, or application-generated files that should have been rotated or removed automatically.

One lesson learned the hard way: large directory size is not always caused by one dramatic file. Sometimes the culprit is a folder containing hundreds of thousands of small files. In that case, find -size may not show anything impressive, but du or ncdu will clearly reveal the bloated directory. This is common with caches, package builds, temporary uploads, and application session files.
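When you suspect that kind of directory, counting files can be more revealing than measuring them. The sketch below is one way to do it, assuming GNU find. The function name `count_files_per_dir` and the scratch layout are hypothetical.

```shell
# Hypothetical helper: count regular files per containing directory and
# show the directories with the most files first.
count_files_per_dir() {
    local dir=${1:-.} count=${2:-10}
    find "$dir" -xdev -type f -printf '%h\n' 2>/dev/null \
        | sort \
        | uniq -c \
        | sort -rn \
        | head -n "$count"
}

# Scratch tree: many tiny session files versus a single cache file.
scratch=$(mktemp -d)
mkdir -p "$scratch/sessions" "$scratch/cache"
for i in $(seq 1 50); do : > "$scratch/sessions/sess_$i"; done
: > "$scratch/cache/one"

busiest=$(count_files_per_dir "$scratch" 1)
printf '%s\n' "$busiest"
```

Each output line is a file count followed by a directory path, so a session or cache folder with an unusually high count stands out even when every file in it is tiny.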

Another practical habit is to avoid deleting directly from the first result list. Instead, inspect the file, identify the owning application, and move the file to a temporary holding area if you are unsure. For personal files, that might mean moving old videos or archives to an external drive. For system files, it usually means using the proper cleanup tool. Package caches, journal logs, Docker data, and database files should be handled with their own commands whenever possible.
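The holding-area habit can be sketched as a small bash function. Treat it as an illustration only: `hold_file` and the directory names are invented, and it deliberately refuses to overwrite anything already in the holding area.

```shell
# Hypothetical helper: move a file into a holding directory instead of
# deleting it, without overwriting an existing file of the same name.
hold_file() {
    local src=$1 holding=$2
    local dest
    dest="$holding/$(basename -- "$src")"
    mkdir -p -- "$holding" || return 1
    if [ -e "$dest" ]; then
        printf 'refusing to overwrite %s\n' "$dest" >&2
        return 1
    fi
    mv -- "$src" "$dest" && printf 'moved %s -> %s\n' "$src" "$dest"
}

# Demo on a scratch file (names invented for the example).
scratch=$(mktemp -d)
printf 'old data\n' > "$scratch/old-backup.tar"
hold_file "$scratch/old-backup.tar" "$scratch/holding"
```

A holding area on the same partition does not free any space, so in practice it would point at an external drive or another filesystem. If nothing breaks after a few days, the held files can be removed for good.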

On desktop Linux, graphical tools are underrated. Disk Usage Analyzer is not just for beginners. It is genuinely useful when you want a quick visual overview of your home folder. It can reveal patterns that terminal output does not make obvious, such as a media folder growing out of control or one hidden application cache quietly expanding like bread dough in a warm kitchen.

My preferred workflow is simple: use df -h to find the full partition, du to locate the biggest directory, ncdu to explore interactively, and find when I need precise file-size filtering. That combination covers almost every Linux storage mystery without requiring complicated scripts. Once you learn these four options, running out of disk space becomes less of a panic moment and more of a routine cleanup task.

Conclusion

Learning how to find large files on Linux is one of those practical skills that saves time, stress, and possibly a few dramatic sighs at 2 a.m. The find command is perfect for locating files above a specific size. The du and sort combination helps you identify large directories. ncdu gives you a fast interactive terminal interface, while Disk Usage Analyzer offers a friendly graphical view for desktop users.

The safest strategy is to investigate before deleting. Start with broad disk usage, narrow down to directories, inspect large files, and use application-specific cleanup tools when needed. With these four easy options, you can reclaim storage space confidently without treating your Linux system like a mystery box full of questionable decisions.

Note: Commands that scan system directories may require administrator privileges. Always verify a file’s purpose before deleting it, especially under /var, /usr, /etc, database directories, container storage, or application-managed folders.